5.3.7: Measuring Crustal Deformation Directly Using Tectonic Geodesy

As stated at the beginning of this chapter, Harry F. Reid based his elastic rebound theory on the displacement of survey benchmarks relative to one another. These benchmarks recorded the slow elastic deformation of the Earth’s crust prior to the 1906 San Francisco Earthquake. After the earthquake, the benchmarks snapped back, thereby giving an estimate of tectonic deformation near the San Andreas Fault independent of seismographs or of geological observations. Continued measurements of the benchmarks record the accumulation of strain toward the next earthquake. If geology records past earthquakes and seismographs record earthquakes as they happen, measuring the accumulation of tectonic strain says something about the earthquakes of the future.

Reid’s work means that a major contribution to the understanding of earthquakes can be made by a branch of civil engineering called surveying: land measurements of the distance between survey markers (trilateration), the horizontal angles between three markers (triangulation), and the difference in elevation between two survey markers (leveling).
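To show how trilateration fixes a position, here is a minimal Python sketch. It assumes a flat, two-dimensional survey plane and error-free distances, neither of which holds in real geodetic surveys, and the function name `trilaterate_2d` is hypothetical. Given two benchmarks with known coordinates and the measured distances from each of them to a third marker, it returns the two mirror-image positions the third marker could occupy.

```python
import math

def trilaterate_2d(a, b, d_a, d_b):
    """Locate a marker P from its measured distances to two known benchmarks A and B.

    a, b: (x, y) coordinates of the known benchmarks (flat-plane assumption).
    d_a, d_b: measured distances from P to A and from P to B.
    Returns the two mirror-image solutions on either side of the baseline AB.
    """
    ax, ay = a
    bx, by = b
    base = math.hypot(bx - ax, by - ay)  # length of the known baseline AB
    # Distance from A, measured along AB, to the foot of the perpendicular from P
    along = (d_a**2 - d_b**2 + base**2) / (2 * base)
    h_sq = d_a**2 - along**2
    if h_sq < 0:
        raise ValueError("distances are inconsistent with the baseline")
    h = math.sqrt(h_sq)  # perpendicular distance of P from the baseline
    ux, uy = (bx - ax) / base, (by - ay) / base  # unit vector along AB
    fx, fy = ax + along * ux, ay + along * uy    # foot of the perpendicular
    return (fx - h * uy, fy + h * ux), (fx + h * uy, fy - h * ux)
```

For example, with benchmarks at (0, 0) and (6, 0) and a distance of 5 from each, the third marker lies at (3, 4) or (3, -4); a third measured distance, or simply knowing which side of the baseline the marker is on, settles which.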

Surveyors need to know about the effects of earthquakes on property boundaries. Surveying normally implies that the land stays where it is. But if an earthquake is accompanied by a ten-foot strike-slip offset on a fault crossing your property, would your property lines be offset, too? In Japan, where individual rice paddies and tea gardens have property boundaries that are hundreds of years old, property boundaries are offset. A land survey map of part of the island of Shikoku shows rice paddy property boundaries offset by a large earthquake fault in A.D. 1596.

The need to have accurate land surveys leads to the science of geodesy, the study of the shape and configuration of the Earth, a discipline that is part of civil engineering. Tectonic geodesy is the comparison of surveys done at different times to reveal the deformation of the crust between the times of the surveys. Following Reid’s discovery, the U.S. Coast and Geodetic Survey (now the National Geodetic Survey) took over the responsibility for tectonic geodesy, which led them into strong-motion seismology. For a time, the Coast and Geodetic Survey was the only federal agency with a mandate to study earthquakes (see Chapter 13).
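The comparison of two surveys can be sketched in a few lines of Python. This is an illustration under simple assumptions, not any agency's actual procedure; the function name and the numbers in the usage note are hypothetical. It takes two measurements of the same benchmark-to-benchmark line and returns the change in length, the strain (change in length divided by the original length), and the strain per year.

```python
def line_strain(length_before_m, length_after_m, years_between):
    """Compare two surveys of the same benchmark-to-benchmark line.

    Returns (change in length in mm, strain, strain per year).
    Positive strain means the line lengthened; negative means it shortened.
    """
    change_m = length_after_m - length_before_m
    strain = change_m / length_before_m      # dimensionless
    return change_m * 1000.0, strain, strain / years_between
```

A 10-kilometer line that lengthened by 150 millimeters over 5 years, for example, gives a strain of 0.000015, or 0.000003 per year.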

After the great Alaska Earthquake of 1964, the USGS began to take an interest in tectonic geodesy as a way to study earthquakes. The leader in this effort was a young geophysicist named Jim Savage, who compared surveys before and after the earthquake to measure the crustal changes that accompanied the earthquake. The elevation changes were so large that along the Alaskan coastline, they could be easily seen without surveying instruments: sea level appeared to rise suddenly where the land went down, and it appeared to drop where the land went up. (Of course, sea level didn’t actually rise or fall; the land level changed.)

Up until the time of the earthquake, some scientists believed that the faults at deep-sea trenches were vertical. But Savage, working with geologist George Plafker, was able to use the differences in surveys to show that the great subduction-zone fault that had generated the earthquake dips gently northward, underneath the Alaskan landmass. Savage and Plafker then studied an even larger subduction-zone earthquake that had struck southern Chile in 1960 (Moment Magnitude Mw 9.5, the largest earthquake ever recorded) and showed that the earthquake fault in the Chilean deep-sea trench dipped beneath the South American continent. Seismologists, using newly established high-quality seismographs set up to monitor the testing of nuclear weapons, confirmed this by showing that earthquakes defined a landward-dipping zone that could be traced hundreds of miles beneath the surface. These became known as Wadati-Benioff zones, named for the seismologists who first described them. All these discoveries were building blocks in the emerging new theory of plate tectonics.

In 1980, Savage turned his attention to the Cascadia Subduction Zone off Washington and Oregon. This subduction zone had almost no earthquakes and was thought to deform without them. But Savage, resurveying networks in the Puget Sound area and around the Hanford Nuclear Reservation in eastern Washington, found that these areas were accumulating elastic strain, just as the crust had in Alaska and along the San Andreas Fault. Then John Adams, a young New Zealand geologist transplanted to the Geological Survey of Canada, remeasured leveling lines across the Coast Range and found that the coastal region was tilting eastward toward the Willamette Valley and Puget Sound, providing further evidence of elastic deformation. These geodetic observations were critical in convincing scientists that the Cascadia Subduction Zone was capable of large earthquakes, like the subduction zones off southern Alaska and Chile (see Chapter 4).
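Tilt from repeated leveling can be worked out the same way: the height change at each benchmark is plotted against distance along the line, and the slope of that plot is the tilt. The minimal Python sketch below assumes a straight leveling line and hypothetical benchmark positions and heights; it fits the slope by least squares so that random error at individual benchmarks averages out. The function name `tilt_rate` is illustrative.

```python
def tilt_rate(dist_km, heights_old_m, heights_new_m, years):
    """Tilt rate (microradians per year) along a releveled line.

    dist_km: distance of each benchmark from the start of the line, in km.
    heights_old_m, heights_new_m: elevations of the same benchmarks in the two surveys.
    Fits a least-squares straight line to height change versus distance;
    its slope (metres per metre) is the tilt in radians.
    """
    xs = [d * 1000.0 for d in dist_km]  # km -> m
    dh = [new - old for old, new in zip(heights_old_m, heights_new_m)]
    n = len(xs)
    mean_x = sum(xs) / n
    mean_dh = sum(dh) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (h - mean_dh) for x, h in zip(xs, dh))
    tilt_rad = sxy / sxx
    return tilt_rad * 1e6 / years
```

For instance, `tilt_rate([0, 50, 100], [10.0, 20.0, 30.0], [10.1, 20.05, 30.0], 50.0)` returns about -0.02 microradians per year. A negative value means benchmarks farther along the line rose less than those near its start; for a line running from the coast inland to the east, that would correspond to an eastward tilt.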

At the same time, the San Andreas fault system was being resurveyed along a spider web of line-length measurements between benchmarks on both sides of the fault. The lines were resurveyed once a year at first, and more frequently later in the project. The results confirmed Reid’s elastic-rebound theory, and the large number of benchmarks and the more frequent surveying campaigns added precision that had been lacking before. Not only could Savage and his coworkers determine how fast strain is building up on the San Andreas, Hayward, and Calaveras faults, but they were also able to determine how deep within the Earth’s crust the faults are locked.
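The locking depth comes from the shape of the velocity profile across the fault. A simple elastic model often used for this, associated with Savage and Burford's work on the San Andreas, treats the fault as a long vertical strike-slip fault that slips freely at the long-term rate V below a locking depth D and is stuck above it. A benchmark at distance x from the fault then moves parallel to it at v(x) = (V/π) arctan(x/D), relative to the fault trace. The Python sketch below, with hypothetical function names and an arbitrary search grid, evaluates that model and finds V and D by brute-force least squares; real studies use more elaborate inversions and weight the data by measurement error.

```python
import math

def fault_parallel_velocity(x_km, slip_rate_mm_yr, locking_depth_km):
    """Interseismic velocity (mm/yr) parallel to a locked vertical strike-slip fault.

    x_km: horizontal distance from the fault trace (negative on one side).
    Velocities are relative to the fault trace and approach +/- slip_rate/2 far away.
    """
    return (slip_rate_mm_yr / math.pi) * math.atan(x_km / locking_depth_km)

def fit_locking_depth(xs_km, vs_mm_yr):
    """Grid search for the slip rate and locking depth that best fit observed
    velocities in the least-squares sense. Grid bounds are illustrative assumptions."""
    best = None
    for depth in [d * 0.5 for d in range(2, 61)]:      # 1 to 30 km
        for rate in [r * 0.5 for r in range(2, 121)]:  # 1 to 60 mm/yr
            misfit = sum((v - fault_parallel_velocity(x, rate, depth)) ** 2
                         for x, v in zip(xs_km, vs_mm_yr))
            if best is None or misfit < best[0]:
                best = (misfit, rate, depth)
    return best[1], best[2]  # (slip rate mm/yr, locking depth km)
```

Feeding it velocities computed from the model itself with V = 34 mm/yr and D = 15 km, at points up to 100 km on either side of the fault, recovers (34.0, 15.0), since both values lie on the grid. Distant benchmarks constrain V; the steepness of the profile near the fault constrains D.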

After an earthquake in the San Fernando Valley near Los Angeles in 1971, Savage releveled survey lines that crossed the surface rupture. He was able to use the geodetic data to map the source fault dipping beneath the San Gabriel Mountains, just as he had done for the Alaskan Earthquake fault seven years before. The depiction of the source fault based on tectonic geodesy could be compared with the fault as illuminated by the distribution of aftershocks and by the surface geology of the fault scarp. This would be the wave of the future in the analysis of California earthquakes.

Still, the land survey techniques were too slow, too cumbersome, and too expensive. A major problem was that the baselines were short because the surveyors had to see from one benchmark to the other to make a measurement.

The solution to the problem came from space.

First, astronomers discovered radio signals from quasars, extremely distant objects in deep outer space. By recording these signals simultaneously at several radio telescopes as the Earth rotated, scientists could determine the distances between the radio telescopes to great precision, even though they were hundreds of miles apart. And these distances changed over time. Using this technique, called Very Long Baseline Interferometry (VLBI), the National Aeronautics and Space Administration (NASA) was able to determine the motion of radio telescopes on one side of the San Andreas Fault with respect to telescopes on the other side. These motions confirmed Savage’s observations, even though the radio telescopes being used were hundreds of miles away from the San Andreas Fault.
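The geometry behind VLBI can be stated compactly. A quasar is so far away that its radio waves arrive as flat wavefronts, so the wavefront reaches one telescope earlier than the other by τ = (B · ŝ)/c, where B is the baseline vector between the telescopes, ŝ is the unit vector toward the quasar, and c is the speed of light. Because the Earth's rotation keeps changing the direction of each quasar relative to the baseline, delays measured over many hours pin down all three components of B. This Python sketch uses hypothetical function names, assumes the quasar directions are already expressed in an Earth-fixed frame, and ignores clock offsets and atmospheric delays; it computes delays and recovers a baseline from them by least squares.

```python
import numpy as np

C = 299_792_458.0  # speed of light, m/s

def geometric_delay(baseline_m, source_dir):
    """Arrival-time difference (s) of a flat wavefront at two telescopes.

    baseline_m: vector from telescope 1 to telescope 2, in metres (Earth-fixed frame).
    source_dir: vector pointing toward the quasar (normalized here).
    A positive delay means the wavefront reaches telescope 2 first.
    """
    s = np.asarray(source_dir, dtype=float)
    s = s / np.linalg.norm(s)
    return float(np.dot(baseline_m, s)) / C

def estimate_baseline(source_dirs, delays_s):
    """Least-squares baseline vector (m) from delays to three or more quasar directions."""
    S = np.array([np.asarray(d, dtype=float) / np.linalg.norm(d) for d in source_dirs])
    baseline, *_ = np.linalg.lstsq(S, C * np.asarray(delays_s, dtype=float), rcond=None)
    return baseline
```

Repeating the estimate from observations made years apart and differencing the results gives the change in the baseline, which is the tectonic signal.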

Length of baseline ceased to be a problem, and the motion of a radio telescope at Vandenberg Air Force Base could be compared to that of a telescope in the Mojave Desert, in northeastern California, in Hawaii, in Japan, in Texas, or in Massachusetts. Using VLBI, NASA scientists were able to show that plate motions averaged over hundreds of thousands of years proceed at the same rates as motions measured over only a few years: plate tectonics in real time.

But there were not enough radio telescopes to equal Savage’s dense network of survey stations across the San Andreas Fault. Again, the solution came from space, this time from a group (called a constellation) of NAVSTAR satellites that orbit the Earth at an altitude of about twelve thousand miles. This developed into the Global Positioning System, or GPS.

GPS was developed by the military so that smart bombs could zero in on individual buildings in Baghdad or Belgrade, but low-cost GPS receivers allow hunters to locate themselves in the mountains and fishing boats to be located at sea. You can install one on the dashboard of your car to find where you are in a strange city. GPS is now widely used in routine surveying. In tectonic geodesy, it doesn’t really matter where we are exactly, but only where we are relative to the last time we measured. This allows us to measure changes of only a fraction of an inch, which is sufficient to measure strain accumulation. Because variations in the troposphere, the lowest layer of the atmosphere, delay the satellite signals by uncertain amounts, GPS does much better at measuring horizontal changes than vertical changes; leveling using GPS is less accurate than leveling based on ground surveys.

NASA’s Jet Propulsion Laboratory in Pasadena, together with scientists at Caltech and MIT, began a series of survey campaigns in southern California in the late 1980s, and they confirmed the earlier ground-based and VLBI measurements. GPS campaigns could be done quickly and inexpensively, and, as with VLBI, it was not necessary to see from one ground survey point to the next. It was only necessary for every station to receive signals from the orbiting NAVSTAR satellites, at least four at a time for a full position fix.
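A GPS receiver needs signals from at least four satellites at once because of how it solves for its position. It measures a pseudorange to each satellite: the true distance plus an error caused by the receiver's inexpensive clock, which is the same for every satellite at a given instant. With three position coordinates and one clock error to solve for, four satellites are the minimum. The Python sketch below is a standard Gauss-Newton least-squares solution with a hypothetical function name; it assumes satellite positions are known exactly and ignores atmospheric delays and satellite clock errors.

```python
import numpy as np

def gps_fix(sat_positions_m, pseudoranges_m, iterations=20):
    """Receiver position (m) and clock bias (m) from four or more pseudoranges.

    Model: pseudorange = |satellite - receiver| + b, where b is the receiver clock
    error times the speed of light. Unknowns: x, y, z, b.
    """
    sats = np.asarray(sat_positions_m, dtype=float)
    rho = np.asarray(pseudoranges_m, dtype=float)
    if len(sats) < 4:
        raise ValueError("need at least four satellites for four unknowns")
    est = np.zeros(4)  # start at the Earth's centre with zero clock bias
    for _ in range(iterations):
        diff = est[:3] - sats                   # satellite-to-receiver vectors
        ranges = np.linalg.norm(diff, axis=1)
        residual = rho - (ranges + est[3])      # observed minus predicted
        # Partial derivatives: unit vectors (satellite -> receiver) and 1 for the bias
        J = np.hstack([diff / ranges[:, None], np.ones((len(sats), 1))])
        step, *_ = np.linalg.lstsq(J, residual, rcond=None)
        est += step
    return est[:3], est[3]
```

Tectonic geodesy goes further than this single-epoch fix: it averages many hours of data and differences results between stations and between years, which cancels much of the error common to nearby receivers.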

In addition to measuring the long-term accumulation of elastic strain, GPS was able to measure the release of strain in the 1992 Landers Earthquake in the Mojave Desert and the 1994 Northridge Earthquake in the San Fernando Valley. The survey network around the San Fernando Valley was dense enough that GPS could determine the size and orientation of the source fault plane and the amount of displacement during the earthquake. This determination of magnitude was independent of the fault source measurements made using seismography or geology.
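The link from fault size and displacement to magnitude runs through the seismic moment, M0 = μ × A × D, where μ is the rigidity (stiffness) of the crust, A the area of the fault plane, and D the average displacement on it. The moment magnitude is then Mw = (2/3)(log10 M0 − 9.1), with M0 in newton-meters. The Python sketch below assumes a typical crustal rigidity of 3 × 10^10 pascals; the function name and the example fault dimensions are hypothetical, not values for Landers or Northridge.

```python
import math

def moment_magnitude(length_km, width_km, slip_m, rigidity_pa=3.0e10):
    """Moment magnitude from fault dimensions and average slip.

    rigidity_pa is an assumed typical value for crustal rock.
    """
    area_m2 = (length_km * 1000.0) * (width_km * 1000.0)
    moment_nm = rigidity_pa * area_m2 * slip_m   # seismic moment, newton-metres
    return (2.0 / 3.0) * (math.log10(moment_nm) - 9.1)
```

A fault plane 20 km long and 15 km wide with 1.5 m of average slip gives a magnitude of about 6.7.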

By the time of the Landers Earthquake, tectonic geodesists recognized that campaign-style GPS, in which teams of geodesists went to the field several times a year to remeasure their ground survey points, was not enough. The measurements needed to be more frequent, and the time of occupation of individual sites needed to be longer, to increase the level of confidence in measured tectonic changes and to look for possible short-term geodetic changes that might precede an earthquake. So permanent GPS receivers were installed at critical localities that were shown to be stable against other types of ground motion unrelated to tectonics, such as ground slumping or freeze-thaw. The permanent network was not dense enough to provide an accurate measure of either the Landers or the Northridge earthquake, but the changes they recorded showed great promise for the future.

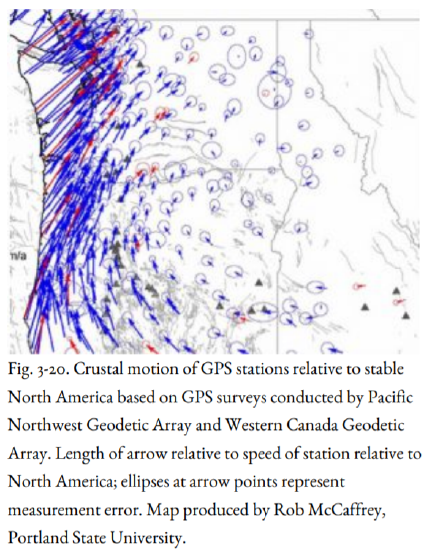

After Northridge, geodetic networks were established in southern California, the San Francisco Bay Area, and the Great Basin area including Nevada and Utah. In the Pacific Northwest, a group of scientists including Herb Dragert of the Pacific Geoscience Centre in Sidney, B.C., and Meghan Miller, then of Central Washington University in Ellensburg, organized networks called the Pacific Northwest Geodetic Array (PANGA) and the Western Canada Deformation Array, building on the early GPS studies in the Northwest carried out by Jim Savage and his colleagues at the USGS. Figure 3-19 shows a GPS receiver being used to measure crustal deformation at a PANGA site in Olympic National Park. The permanent arrays are still augmented by GPS campaigns to obtain denser coverage than permanent stations alone can provide. The PANGA array shows that the deformation of the North American crust is relatively complicated, including clockwise rotation about an imaginary point in northeasternmost Oregon and north-south squeezing of the crust in the Puget Sound region (Figure 3-20). This clockwise rotation was not recognized before GPS, but it is clearly an important first-order feature.

The Landers Earthquake produced one more surprise from space. A European satellite had been obtaining radar images of the Mojave Desert before the earthquake, and it took more images afterward. Radar images are like regular aerial photographs, except that the image is made with microwaves (radar) rather than visible light. When the before and after images were combined by computer, the areas where the ground had moved during the earthquake showed a striped pattern of fringes, called an interferogram. The displacement patterns close to the rupture and in mountainous terrain were too complex to be resolved, but farther away, the radar interferometry patterns were simpler, revealing the amount of deformation of the crust away from the surface rupture. The displacements matched the point displacements measured by GPS, and radar interferometry provided still another independent method to measure the displacement produced by the earthquake. The technique was even able to show the deformation pattern of some of the larger Landers aftershocks. Interferograms were later created for the Northridge and Hector Mine earthquakes; they have even been used to measure the slow accumulation of tectonic strain east of San Francisco Bay and the swelling of the ground above rising magma near the South Sister volcano in Oregon.
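Each stripe, or fringe, in an interferogram marks a change of half the radar wavelength in the distance from the ground to the satellite along the radar's line of sight, because the signal travels out and back. Counting fringes therefore converts the striped picture into displacement. This Python sketch assumes a wavelength of about 56.6 mm, the C-band wavelength of the European ERS-1 satellite, and its function names are hypothetical; sign conventions (whether a positive value means motion toward or away from the satellite) differ between processing packages.

```python
import math

ERS_WAVELENGTH_MM = 56.6  # assumed C-band radar wavelength (ERS-1), millimetres

def los_change_from_fringes(n_fringes, wavelength_mm=ERS_WAVELENGTH_MM):
    """Line-of-sight displacement (mm) represented by a count of full fringes."""
    # Two-way travel: one full fringe equals half a wavelength of ground motion.
    return n_fringes * wavelength_mm / 2.0

def los_change_from_phase(phase_rad, wavelength_mm=ERS_WAVELENGTH_MM):
    """Line-of-sight displacement (mm) from unwrapped interferometric phase."""
    # One fringe is 2*pi of phase, so displacement = wavelength * phase / (4*pi).
    return wavelength_mm * phase_rad / (4.0 * math.pi)
```

Ten fringes counted outward from a point thus correspond to about 283 mm (roughly 11 inches) of line-of-sight change.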